@Zhuocheng Xu

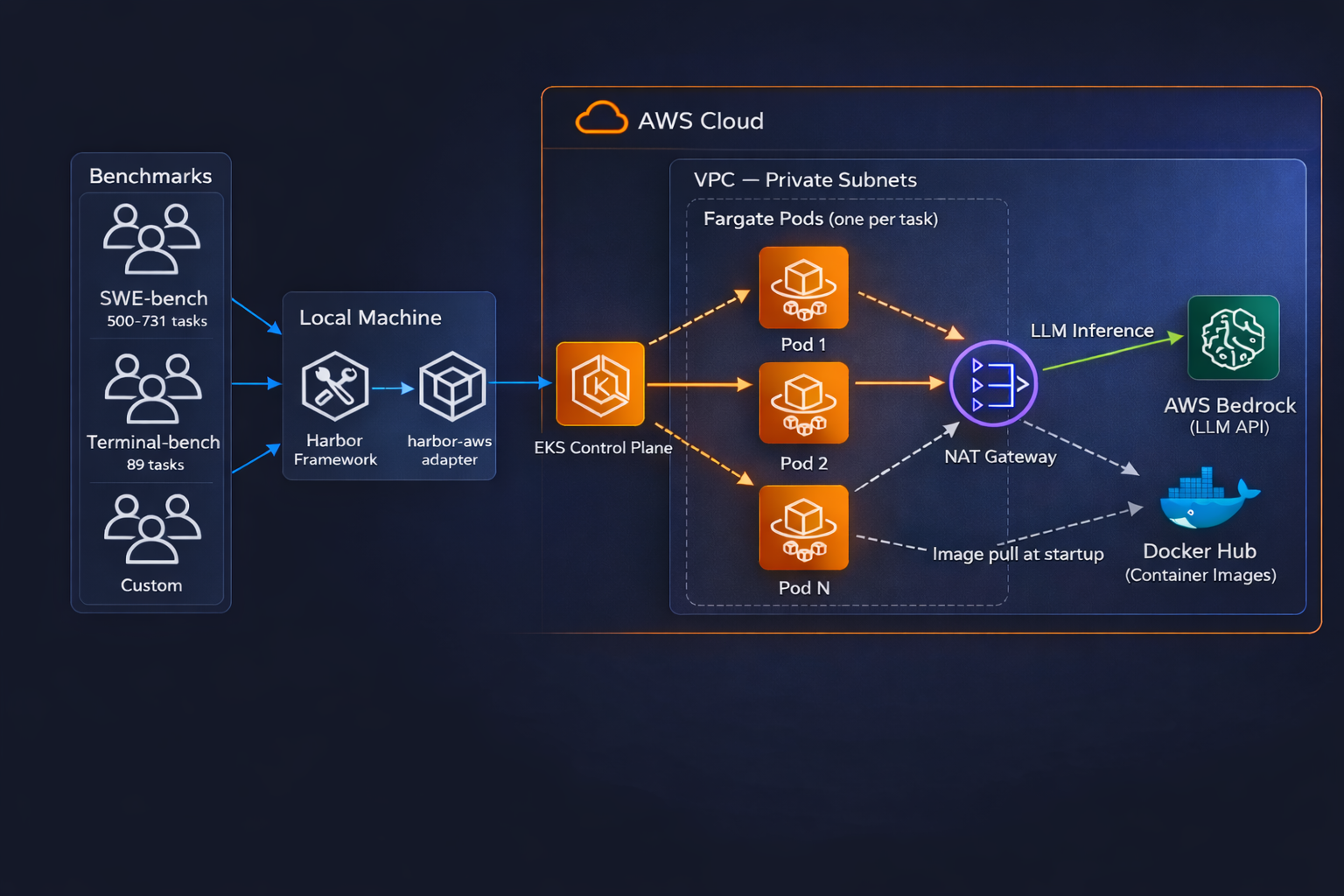

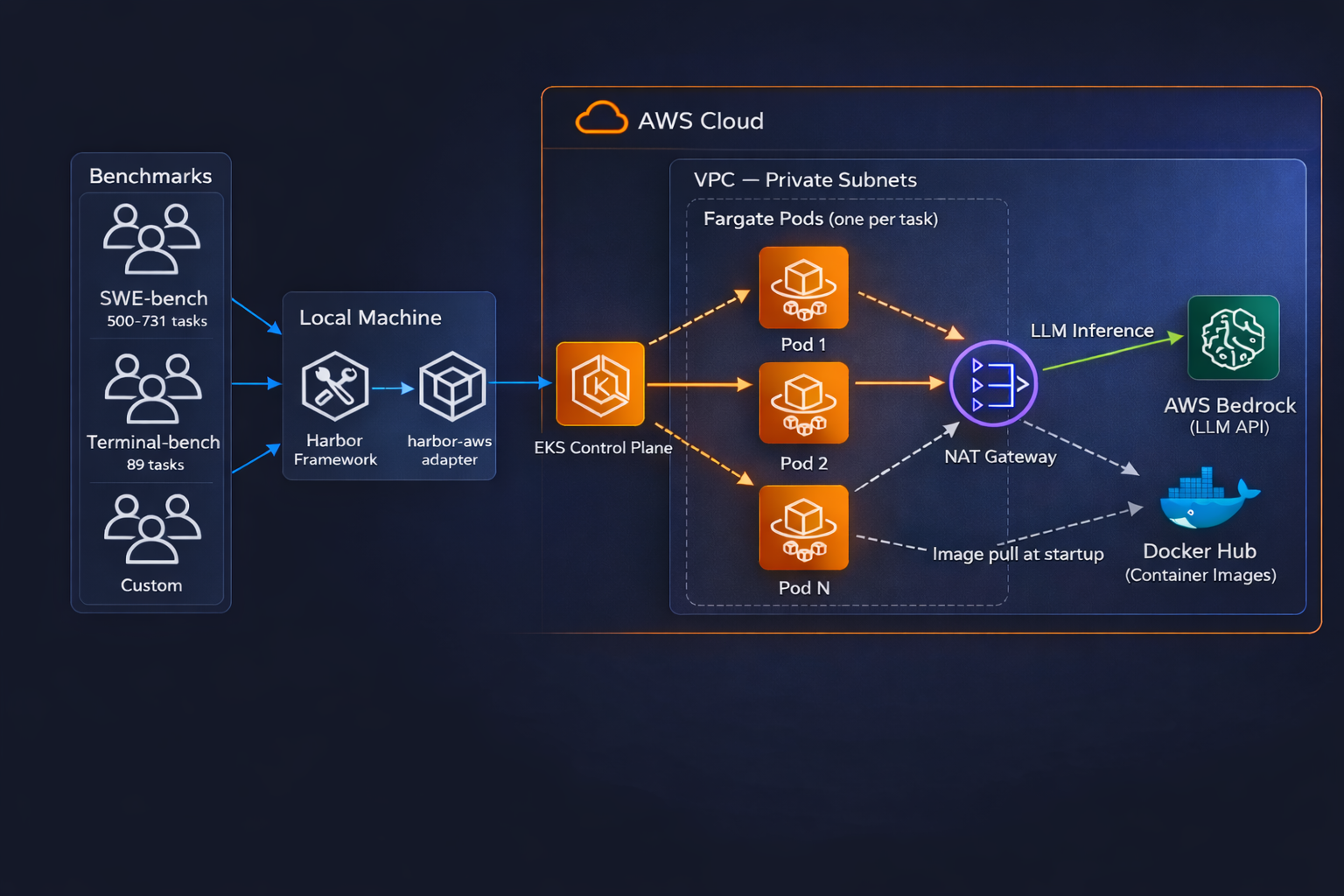

Harbor-AWS is an execution backend for Harbor that runs benchmark tasks as ephemeral pods on EKS Fargate. It targets workloads such as SWE-bench and Terminal-Bench, where a run consists of many mostly independent tasks and each task benefits from an isolated execution environment. The design emphasizes simple infrastructure lifecycle management, pay-per-use compute, and high task-level parallelism.

This document describes the architecture, identifies the main mechanisms affecting concurrency and failure handling, and discusses the system’s advantages and limitations directly.

Harbor provides a clean and extensible abstraction for running agent benchmarks. It manages task orchestration, Docker-based sandboxing, and result collection, and it supports a growing registry of benchmarks, including Terminal-Bench and CompileBench. Local execution is sufficient for small-scale experiments and provides a straightforward workflow for development and testing.

The primary limitation of local execution is scalability. Many benchmark tasks are computationally and operationally heavy: each task runs in an isolated container and may require code compilation, model training, or server configuration. Consequently, local execution is often slow, and parallelism is constrained by the resources of a single machine. This workload profile makes cloud infrastructure a natural deployment target.

Yet Harbor currently lacks native AWS support. As a result, researchers who wish to run benchmarks on AWS must provision and configure the underlying infrastructure themselves, and also determine how Harbor should be deployed and scaled in that environment. This creates substantial setup overhead and increases the risk of inefficiency and misconfiguration.

To address this gap, Harbor-AWS simplifies both infrastructure lifecycle management and benchmark execution on AWS. It makes it easy to provision and tear down the required infrastructure, while also providing Harbor with a preconfigured, profiled AWS backend that allows users to run benchmarks at high concurrency with minimal manual setup.

Harbor-AWS runs each benchmark task as an isolated pod on EKS Fargate. Four design choices define the system.

One pod per task. Each task is assigned its own Kubernetes pod. The orchestrator creates the pod, stages files, runs the workload, collects results, and deletes the pod afterward. Isolation is therefore structural: tasks do not share a runtime environment. Concurrency is expressed directly as the number of active pods.
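The per-task lifecycle described above can be sketched as follows. This is a minimal illustration, not Harbor-AWS's actual code: `FakePodBackend`, `run_task`, `run_all`, and the step names are hypothetical, and the backend is an in-memory stand-in for the Kubernetes API so the example runs without a cluster. It assumes concurrency is capped by a semaphore whose slots correspond to active pods.

```python
import asyncio
from dataclasses import dataclass, field


@dataclass
class FakePodBackend:
    """Hypothetical in-memory stand-in for the Kubernetes API (illustration only)."""
    active: set = field(default_factory=set)
    peak_active: int = 0

    async def create_pod(self, name: str) -> None:
        self.active.add(name)
        self.peak_active = max(self.peak_active, len(self.active))

    async def run(self, name: str, step: str) -> str:
        await asyncio.sleep(0)  # placeholder for real work inside the pod
        return f"{name}:{step}"

    async def delete_pod(self, name: str) -> None:
        self.active.discard(name)


async def run_task(backend: FakePodBackend, task_id: int, limit: asyncio.Semaphore) -> str:
    pod = f"task-{task_id}"
    async with limit:  # one semaphore slot corresponds to one active pod
        await backend.create_pod(pod)
        try:
            await backend.run(pod, "stage-files")
            await backend.run(pod, "run-workload")
            return await backend.run(pod, "collect-results")
        finally:
            # The pod is deleted even if a step raises, so no pod outlives its task.
            await backend.delete_pod(pod)


async def run_all(backend: FakePodBackend, task_ids, max_pods: int) -> list:
    limit = asyncio.Semaphore(max_pods)
    return await asyncio.gather(*(run_task(backend, t, limit) for t in task_ids))


if __name__ == "__main__":
    backend = FakePodBackend()
    results = asyncio.run(run_all(backend, range(10), max_pods=3))
    print(len(results), "tasks finished; peak active pods:", backend.peak_active)
```

Placing deletion in a `finally` block is one way to keep the pod count an accurate measure of in-flight work, since a failed task releases its pod and its concurrency slot together.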

No in-container daemon. The system interacts with containers through Kubernetes exec for command execution and tar-over-exec for file transfer, the same primitives underlying kubectl exec and kubectl cp. As a result, benchmark images can run without modification. The tradeoff is that all interaction flows through Kubernetes control-plane paths.
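To make the tar-over-exec idea concrete, the sketch below shows only the archive side of a transfer using Python's standard `tarfile` module. The helper names (`pack_files`, `unpack_files`, `upload_command`) are hypothetical and not taken from Harbor-AWS. It assumes the container image ships a `tar` binary and that the archive bytes would be streamed to that command's stdin over a Kubernetes exec session; the exec transport itself is omitted so the example stays runnable without a cluster.

```python
import io
import tarfile


def pack_files(files: dict[str, bytes]) -> bytes:
    """Build an uncompressed tar archive in memory from {path: content}."""
    buf = io.BytesIO()
    with tarfile.open(fileobj=buf, mode="w") as tar:
        for path, data in files.items():
            info = tarfile.TarInfo(name=path)
            info.size = len(data)
            tar.addfile(info, io.BytesIO(data))
    return buf.getvalue()


def unpack_files(archive: bytes) -> dict[str, bytes]:
    """Read regular files back out of a tar archive (the download direction)."""
    out = {}
    with tarfile.open(fileobj=io.BytesIO(archive), mode="r") as tar:
        for member in tar.getmembers():
            if member.isfile():
                out[member.name] = tar.extractfile(member).read()
    return out


def upload_command(dest_dir: str) -> list[str]:
    """Command assumed to run inside the container, reading the archive from stdin."""
    return ["tar", "-xf", "-", "-C", dest_dir]


if __name__ == "__main__":
    archive = pack_files({"task/run.sh": b"echo hello\n", "task/input.txt": b"42\n"})
    print(upload_command("/workspace"), len(archive), "bytes to stream")
    print(sorted(unpack_files(archive)))
```

Because the only requirement on the image is a working `tar`, this approach is consistent with running benchmark images unmodified; the cost is that every byte transferred travels through the exec stream.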